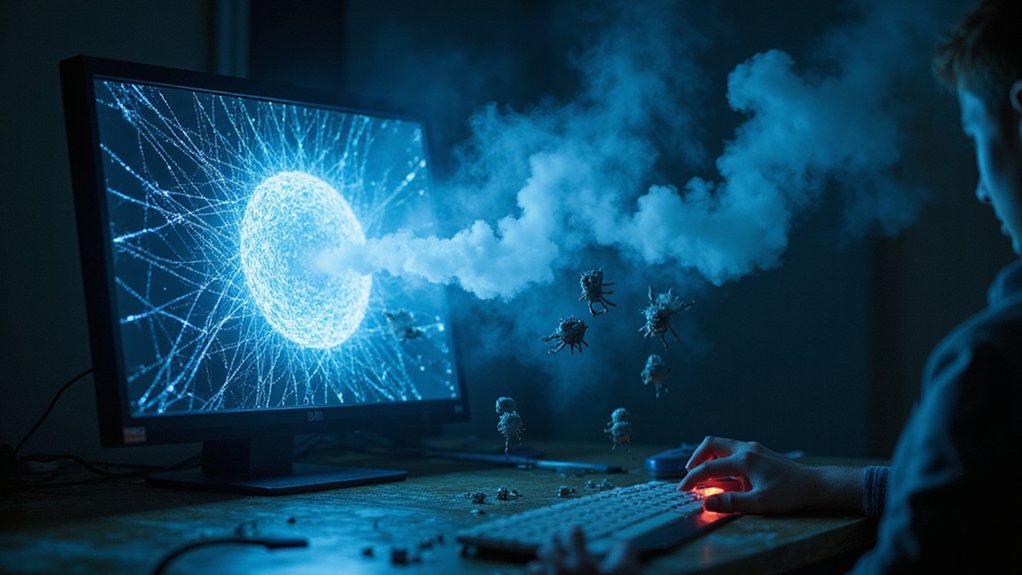

Skepticism is growing as artificial intelligence reshapes our digital world. People are finding it harder to trust what they see online as AI-generated deepfakes and synthetic content become more common. Research shows only 26% of adults trust information produced by AI, while a substantial 68% consider AI content untrustworthy.

The flood of AI-created material is causing widespread disillusionment. Industry experts predict deepfakes will become mainstream by 2026, evolving from a means of damaging reputations into tools for fraud and manipulation. This shift is eroding the foundation of trust that once existed in digital spaces.

Consumers are responding by treating trust as a prerequisite for engagement. Without credibility, even the most polished execution fails to connect with increasingly skeptical audiences. In this AI-saturated landscape, brands that focus on customer-centered experiences consistently outperform competitors in building loyalty. This trust deficit extends beyond content to the platforms that distribute it.

Trust isn’t optional—it’s the gateway to engagement in an increasingly AI-driven digital landscape.

The problem reaches beyond AI content. Public confidence in institutions and traditional media continues to decline, with people turning to personal networks and curated sources instead. Only 14% of online adults in Australia, the UK, and the US trust AI in high-stakes situations like self-driving cars.

Faith in government oversight is also limited: just 55% of adults across 25 countries express confidence in their nation’s ability to regulate AI effectively, while 32% express outright distrust in that ability.

This “trust apocalypse” feeds a troubling effect known as the “liar’s dividend,” in which authentic content can be dismissed as fake simply because deepfakes exist. Spending on deepfake detection technology is projected to grow by 40% across various industries as organizations scramble to authenticate their content.

As people struggle with information overload, many disengage or retreat to familiar sources, regardless of those sources' accuracy. Fears of AI-enabled identity theft (78%) and deceptive political content (74%) are fueling anxiety about all digital interactions. The emergence of AI-powered platforms that can generate sophisticated fake IDs for as little as $15 has further intensified public paranoia about digital verification systems.

Even when content appears legitimate, doubt persists. For many users, the digital landscape has transformed from a space of discovery into one of suspicion.

References

- https://www.forrester.com/blogs/predictions-2026-trust-privacy-how-genai-deepfakes-and-privacy-tech-will-affect-trust-globally/

- https://www.usertesting.com/blog/ai-driven-marketing-trends

- https://observer.com/2025/12/confidential-ai-trust-enterprise-adoption-2026/

- https://www.nu.edu/blog/ai-statistics-trends/

- https://www.intuition.com/ai-stats-every-business-must-know-in-2026/

- https://www.pewresearch.org/2025/10/15/trust-in-own-country-to-regulate-use-of-ai/

- https://www.pwc.com/us/en/tech-effect/ai-analytics/ai-predictions.html

- https://www.nab.org/documents/newsRoom/pressRelease.asp?id=7344

- https://www.onetrust.com/resources/onetrust-2026-predictions-report-into-the-age-of-ai-lessons-from-the-future/