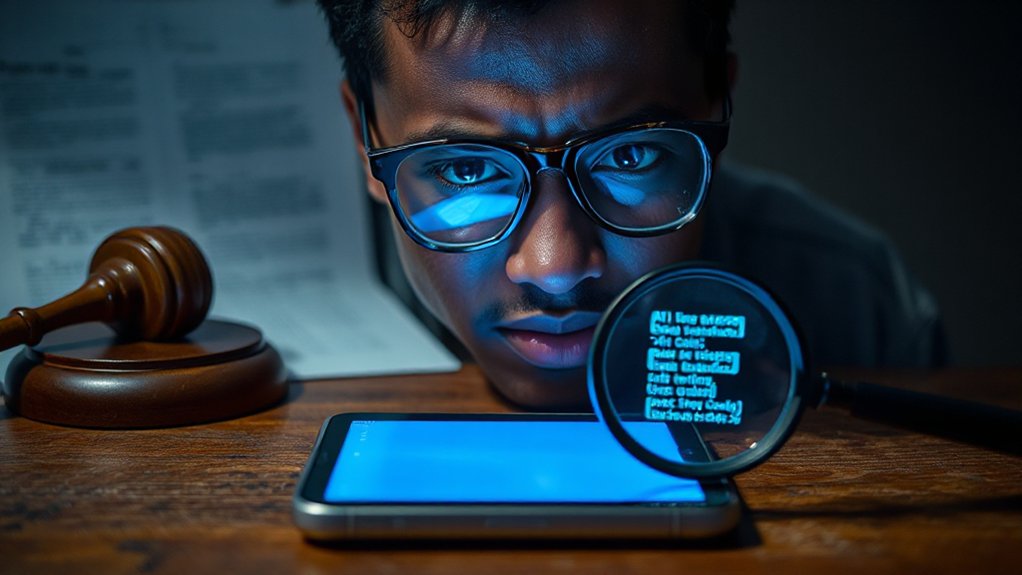

A legal bombshell has hit the AI industry: U.S. Magistrate Judge Ona T. Wang has ordered OpenAI to preserve all ChatGPT conversation logs indefinitely. The ruling treats AI chat histories as potential evidence in ongoing copyright litigation and overrides OpenAI’s usual data deletion policies. The order applies even to conversations that users have deleted.

The preservation requirement covers not just the text of conversations but also metadata such as timestamps and user identifiers, which together can be used to build detailed profiles of user behavior. The order affects all consumer tiers of ChatGPT. Only ChatGPT Enterprise and Edu customers, and API customers with zero-retention agreements, are exempt.

Courts have dismissed OpenAI’s privacy concerns, finding that anonymization isn’t enough to protect user identities during legal discovery. The decision reflects a growing tendency to treat AI conversations as business records subject to preservation and review. Millions of conversations from users with no involvement in the lawsuits are now subject to preservation, including up to 20 million chat logs ordered for production as evidence.

Privacy protections fall short as courts mandate preservation of millions of unrelated user conversations for legal evidence.

The ruling creates serious risks for sensitive information shared with AI systems. Attorney-client communications, journalistic sources, and business data could all be exposed. Even with confidentiality orders in place, user chat data must still be produced when demanded by courts. Judge Wang specifically denied OpenAI’s challenge to the preservation order, prioritizing potential evidence over user privacy concerns. This situation reflects broader security vulnerabilities in AI systems that have already led to at least one serious data breach exposing private conversations.

For businesses, this means AI chat histories may become legal business records, discoverable in lawsuits or audits. Companies using ChatGPT for client work, proposals, or strategy now face potential disclosure of those conversations. This reality demands updated policies around AI use.

The order stems from The New York Times Company’s lawsuit against Microsoft and OpenAI for alleged copyright infringement in AI training. The plaintiffs seek these logs to determine whether ChatGPT improperly reproduces copyrighted material from their publications.

This case sets a concerning precedent for AI privacy. Any conversation with an AI system could be subpoenaed in future litigation, regardless of users’ privacy expectations. Because the retention is indefinite, it may last years or even decades, until the copyright cases conclude.
