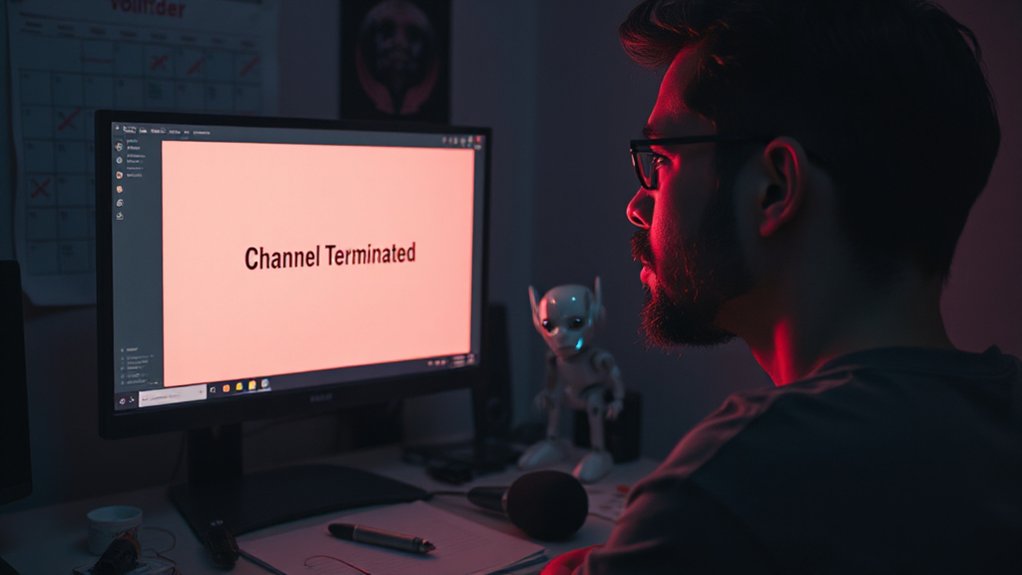

Thousands of YouTube creators face channel terminations each quarter as the platform enforces its Community Guidelines. These bans are part of YouTube’s ongoing efforts to combat spam, deceptive practices, and harmful content. The company publishes quarterly reports showing how many channels it removes.

YouTube’s policy states that some violations make creators permanently ineligible to return to the platform. These include copyright infringement and severe violations such as content that endangers children. In most other cases, creators can appeal a termination, but if the appeal is rejected, they typically must wait a full year before submitting another.

The platform has recently clarified its stance on “inauthentic content,” a policy aimed at channels that post mass-produced, repetitive, or artificial videos. The clarification helps creators understand what might trigger enforcement against their channels. It specifically targets content such as AI-generated videos and slideshows with minimal commentary. Many users wonder whether automated AI systems make most of these decisions without human review. Separately, system changes in August 2025 led Restricted Mode filtering to reduce content visibility without clear notification to creators.

YouTube places responsibility firmly on creators to understand and follow the rules. When channels get terminated, it’s often because they’ve broken these guidelines multiple times or in serious ways. The company says these enforcement actions are necessary to maintain a safe environment for viewers and advertisers alike.

While some creators claim unfair treatment in these mass ban waves, YouTube maintains that it applies its policies consistently. The appeals process exists as a safety net for mistaken terminations, but the data show that relatively few terminations are reversed through it.

As the platform continues to grow, the tension between creator freedom and platform safety remains a challenge. YouTube’s quarterly reports offer transparency into the scale of these enforcement actions, but many creators still feel uncertain about exactly how decisions are made and whether AI algorithms might be making more of these calls than human reviewers.

References

- https://ppc.land/youtube-creators-report-significant-view-drops-following-undisclosed-algorithm-changes/

- https://gyre.pro/blog/youtube-updates-key-summer-changes-for-creators

- https://en.wikipedia.org/wiki/Censorship_of_YouTube

- https://transparencyreport.google.com/youtube-policy?hl=en

- https://www.mediapost.com/publications/article/409808/youtube-gives-banned-creators-a-second-chance.html

- https://www.supertone.ai/en/work/youtube-ai-monetization-policy-2025-eng