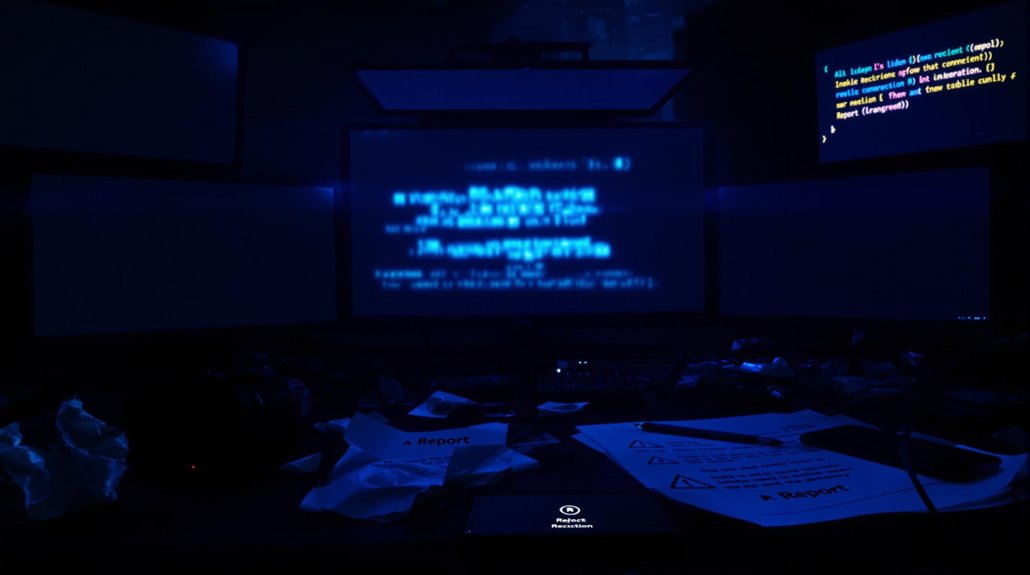

While anyone with a smartphone can now whip up a fake image of their boss doing something embarrassing, the real problem runs deeper than workplace pranks. AI tools like Midjourney, DALL-E 3, and Stable Diffusion have turned image forgery into child's play. No technical skills needed. Just type what you want and, boom, instant fake.

The scary part? These aren't your grandmother's badly Photoshopped pictures. Modern AI-generated images fool people 24% of the time, even when they're looking closely. That's roughly one in four fakes slipping past human detection. Give someone just one second to decide, and their accuracy drops to 72%. Not great odds when you're trying to figure out if that viral photo is real.

Simple portraits are the worst offenders; AI nails those. Group photos with multiple people? Those still have telltale signs that give them away. But here's the twist: some fakes are so obvious that nearly everyone spots them, yet even the most convincing real photos are judged real by only 91% of viewers. People are second-guessing everything now.

The damage spreads like wildfire. Celebrities get hit with explicit deepfakes. Scientists fake research images, and AI systems can now generate Western blot images from simple text prompts without any actual laboratory experiments. Insurance fraudsters generate fake car damage photos. Someone is probably cooking up fake receipts right now. The tools behind this mess are cheap or free, fast, and everywhere, and it takes only minutes to ruin someone's reputation or spread a lie across social media.

Sure, AI images still mess up sometimes: weird shadows, mangled hands, text that looks like it was written by a drunk spider. Researchers found that anatomical errors like unrealistic body proportions and extra fingers remain the easiest tells for spotting fakes. But those flaws are disappearing fast, and the 2025 models make far fewer of them. AI hallucinations still occur in an estimated 3-27% of generated content, but as the remaining errors get subtler, verification gets harder. Traditional detection methods? Largely useless. They hunt for traces of tampering in an original photo, but these new fakes share zero pixels with any original image because there isn't one.

Trust in digital media is circling the drain. News photos, court evidence, your cousin's vacation pics: everything's suspect now. Reverse image searches help when a fake borrows from an existing photo, but they're playing catch-up to technology that's sprinting ahead. The democratization of fake imagery sounds nice until you realize it mostly democratizes harassment, fraud, and lies.

References

- https://medicalxpress.com/news/2025-05-red-flag-ai-generated-fake.html

- https://insight.kellogg.northwestern.edu/article/are-we-any-good-at-spotting-ai-fakes

- https://www.usenix.org/publications/loginonline/democratization-ai-image-generation

- https://www.ciso.inc/blog-posts/spotting-the-nefarious-in-ai/

- https://news.ufl.edu/2025/05/non-consensual-fake-imagery/